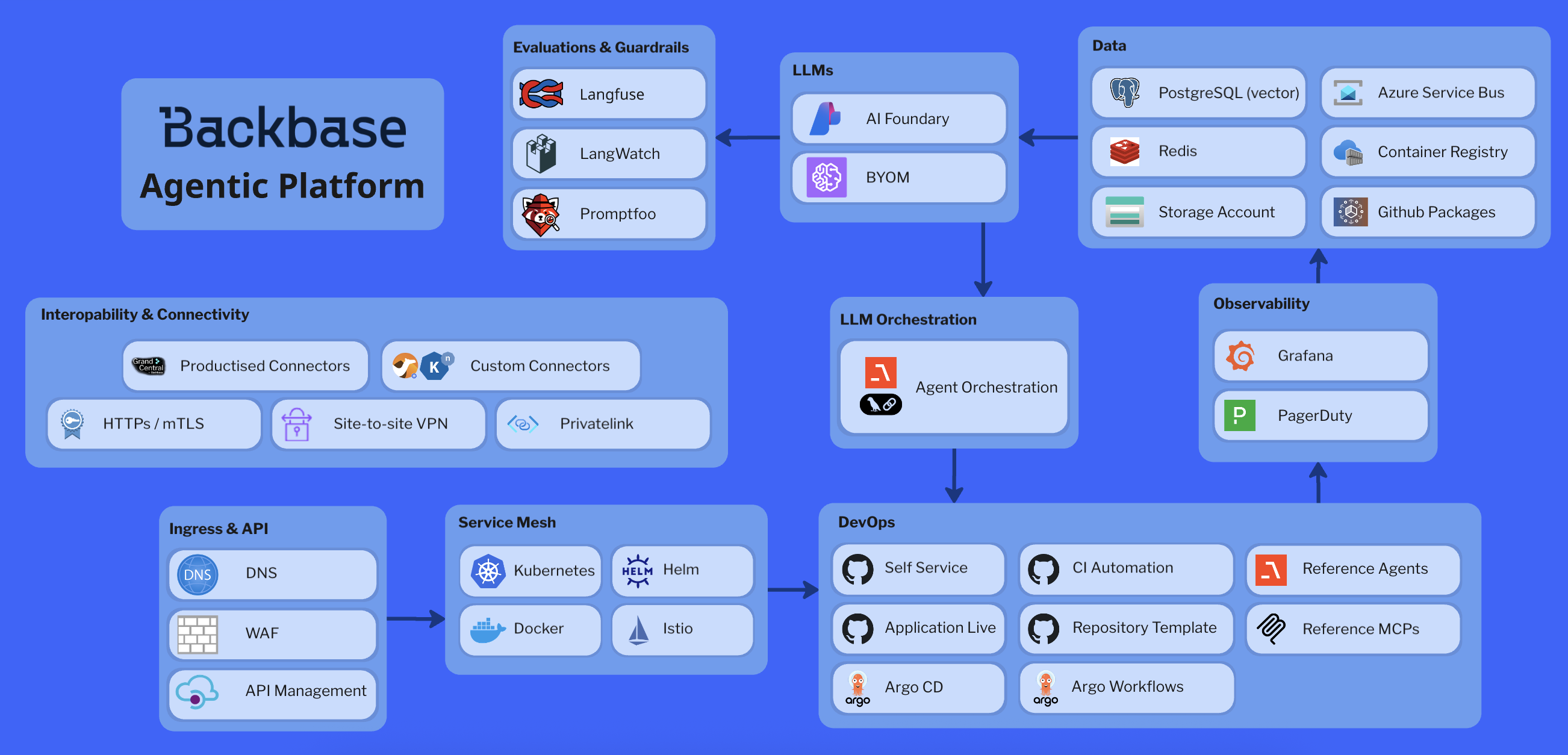

Architecture overview

Platform layers

The architecture diagram shows five foundational layers that work together:

1. foundation (infrastructure)

- Kubernetes: Container orchestration across any cloud provider

- Service Mesh: Istio for traffic management, security, and observability

- Container Runtime: Docker for containerization

- Packaging: Helm charts for deployment configuration

2. data

- Vector Storage: PostgreSQL with pgvector for embeddings and RAG

- Caching: Redis for session state and fast lookups

- Object Storage: Cloud-native storage for artifacts and models

- Message Bus: Azure Service Bus for async workflows

- Registries: Container registries (ACR/ECR/GCR) and package registries (GitHub Packages)
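
To illustrate the vector storage role in RAG, here is a minimal sketch of nearest-neighbour retrieval by cosine distance, the metric pgvector exposes through its `<=>` operator. It runs in plain Python rather than against PostgreSQL, and the document ids, the tiny 3-dimensional embeddings, and the `top_k` helper are all hypothetical, chosen only for illustration.

```python
import math

def cosine_distance(a, b):
    """Cosine distance (1 - cosine similarity) between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def top_k(query, rows, k=2):
    """Return the ids of the k rows whose embeddings are closest to the query."""
    ranked = sorted(rows, key=lambda row: cosine_distance(query, row[1]))
    return [row_id for row_id, _ in ranked[:k]]

# Hypothetical (id, embedding) rows; real embeddings have hundreds of dimensions.
DOCS = [
    ("fees", [1.0, 0.0, 0.0]),
    ("loans", [0.0, 1.0, 0.0]),
    ("fee-waivers", [0.9, 0.1, 0.0]),
]

closest = top_k([1.0, 0.0, 0.0], DOCS)
```

In a database-backed setup the same ranking would typically be expressed as an `ORDER BY embedding <=> :query LIMIT k` clause, with the retrieved rows passed to the model as context.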

3. AI gateway

- Provider Abstraction: Unified interface to Azure AI Foundry, OpenAI, Gemini, Anthropic, or BYO models

- Policy Enforcement: Guardrails, rate limiting, cost management, and content safety

- Traffic Management: Semantic caching, load balancing, and intelligent request routing

- PII Protection: Automatic detection and sanitization

- SDK Access: BB AI SDK for standardized integration
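
The gateway responsibilities listed above can be sketched as a thin wrapper that applies policies before delegating to a provider. This is an illustration under stated assumptions, not the BB AI SDK or the platform's actual gateway: the `Gateway` class, the `Provider` signature, the email-only PII rule, and the fixed-window rate limit are all hypothetical.

```python
import re
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical provider signature: takes a prompt, returns a completion.
Provider = Callable[[str], str]

# Very small stand-in for PII detection: matches email addresses only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def redact_pii(text: str) -> str:
    """Replace email addresses with a placeholder before the prompt leaves the gateway."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)

@dataclass
class Gateway:
    providers: Dict[str, Provider]
    max_requests: int = 2           # allowed requests per window, per caller
    window_seconds: float = 60.0
    _history: Dict[str, List[float]] = field(default_factory=dict)

    def _allow(self, caller: str, now: float) -> bool:
        """Sliding-window check: keep only timestamps still inside the window."""
        recent = [t for t in self._history.get(caller, []) if now - t < self.window_seconds]
        allowed = len(recent) < self.max_requests
        if allowed:
            recent.append(now)
        self._history[caller] = recent
        return allowed

    def complete(self, caller: str, provider: str, prompt: str) -> str:
        """Enforce the rate limit, sanitize the prompt, then route to the chosen provider."""
        if not self._allow(caller, time.monotonic()):
            raise RuntimeError("rate limit exceeded")
        return self.providers[provider](redact_pii(prompt))

def echo_provider(prompt: str) -> str:
    """Fake provider used only for this example."""
    return f"echo: {prompt}"

gateway = Gateway(providers={"echo": echo_provider})
reply = gateway.complete("agent-1", "echo", "mail jane@example.com")
```

Keeping providers behind a single callable signature is what lets the caller switch models by name without changing agent code, while policies stay in one place.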

4. agent runtime

- Agent Workloads: FastAPI-based APIs with background workers

- Orchestration: Agno and LangGraph for multi-step workflows

- MCP Integration: Model Context Protocol servers for tool access

- Banking Services: Pre-integrated domain services (deposits, payments, loans, fraud)

- Guardrails and Safety: Real-time evaluations, content safety filters, PII detection/sanitization, jailbreak prevention, prompt validation

- Observability: OpenTelemetry traces, Langfuse runs, metrics, and logs

5. control plane and ingress

- Ingress: API Management (APIM) with DNS, WAF, SSL termination

- GitOps: Argo CD for declarative, automated deployments

- CI/CD: Automated pipelines with PR checks, builds, and releases

- Self-Service: Repository provisioning and infrastructure automation

- Monitoring: Grafana dashboards, PagerDuty alerts, real-time evaluations

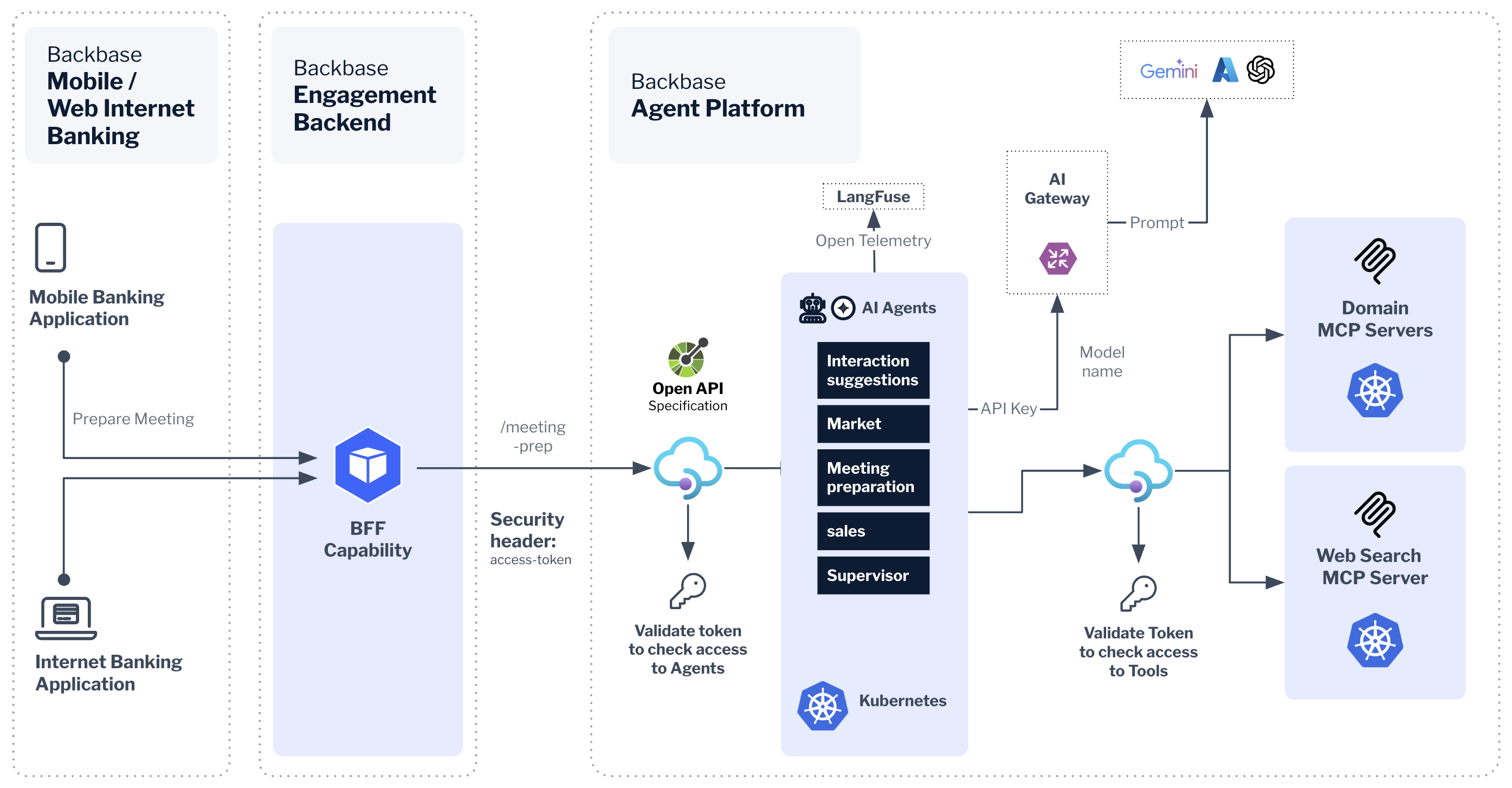

Runtime flow

- Client → APIM → Agent API: Request enters through API Management with authentication and rate limiting

- Agent → AI Gateway: Agent calls LLM through gateway with guardrails and traffic control applied

- AI Gateway → LLM Provider: Request routed to selected provider (Azure AI Foundry or BYO) with policies enforced

- Tool Execution: Agent calls MCP servers (banking domains) or direct REST/GraphQL APIs

- Observability: OTel traces and Langfuse runs capture every step; logs and metrics flow to platform sinks

- Real-time Evaluation: Automated evaluations flag risks (safety, PII, jailbreaks) and feed continuous improvements
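
The flow above can be traced end to end with a small in-process sketch. Everything here is a stand-in chosen for illustration: the `Trace` class substitutes for OTel traces and Langfuse runs, the `demo-key` credential check substitutes for APIM authentication, the fake gateway always selects a hypothetical `get_balance` tool, and the evaluation rule is a deliberately crude digit check.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class Trace:
    """Collects one span name per step, standing in for real tracing backends."""
    spans: List[str] = field(default_factory=list)

    def record(self, name: str) -> None:
        self.spans.append(name)

def ingress(api_key: str, trace: Trace) -> None:
    """APIM step: authenticate the caller (rate limiting omitted for brevity)."""
    trace.record("apim")
    if api_key != "demo-key":  # hypothetical credential check
        raise PermissionError("unauthenticated")

def gateway_call(prompt: str, trace: Trace) -> str:
    """AI gateway step: a fake LLM that always asks for the balance tool."""
    trace.record("ai_gateway")
    trace.record("llm_provider")
    return "get_balance"

def evaluate(answer: str, trace: Trace) -> List[str]:
    """Evaluation step: flag answers containing a run of nine or more digits."""
    trace.record("evaluation")
    risky = any(tok.isdigit() and len(tok) >= 9 for tok in answer.split())
    return ["possible_pii"] if risky else []

# Hypothetical tool registry, standing in for MCP servers or REST/GraphQL APIs.
TOOLS: Dict[str, Callable[[str], str]] = {
    "get_balance": lambda account: f"Balance for {account}: 100.00",
}

def handle_request(api_key: str, prompt: str, account: str) -> dict:
    """Run one request through ingress, agent, gateway, tool, and evaluation."""
    trace = Trace()
    ingress(api_key, trace)
    trace.record("agent_api")
    tool_name = gateway_call(prompt, trace)
    trace.record("tool:" + tool_name)
    answer = TOOLS[tool_name](account)
    flags = evaluate(answer, trace)
    return {"answer": answer, "flags": flags, "spans": trace.spans}

result = handle_request("demo-key", "What is my balance?", "acct-1")
```

The recorded span names follow the same order as the bullets, which makes it easy to see where a failed or flagged request stopped.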

Next steps

Technology stack

Explore the detailed technology stack and implementation deep dive

Getting started

Start building your first AI agent on the platform